
Fudan University, Google


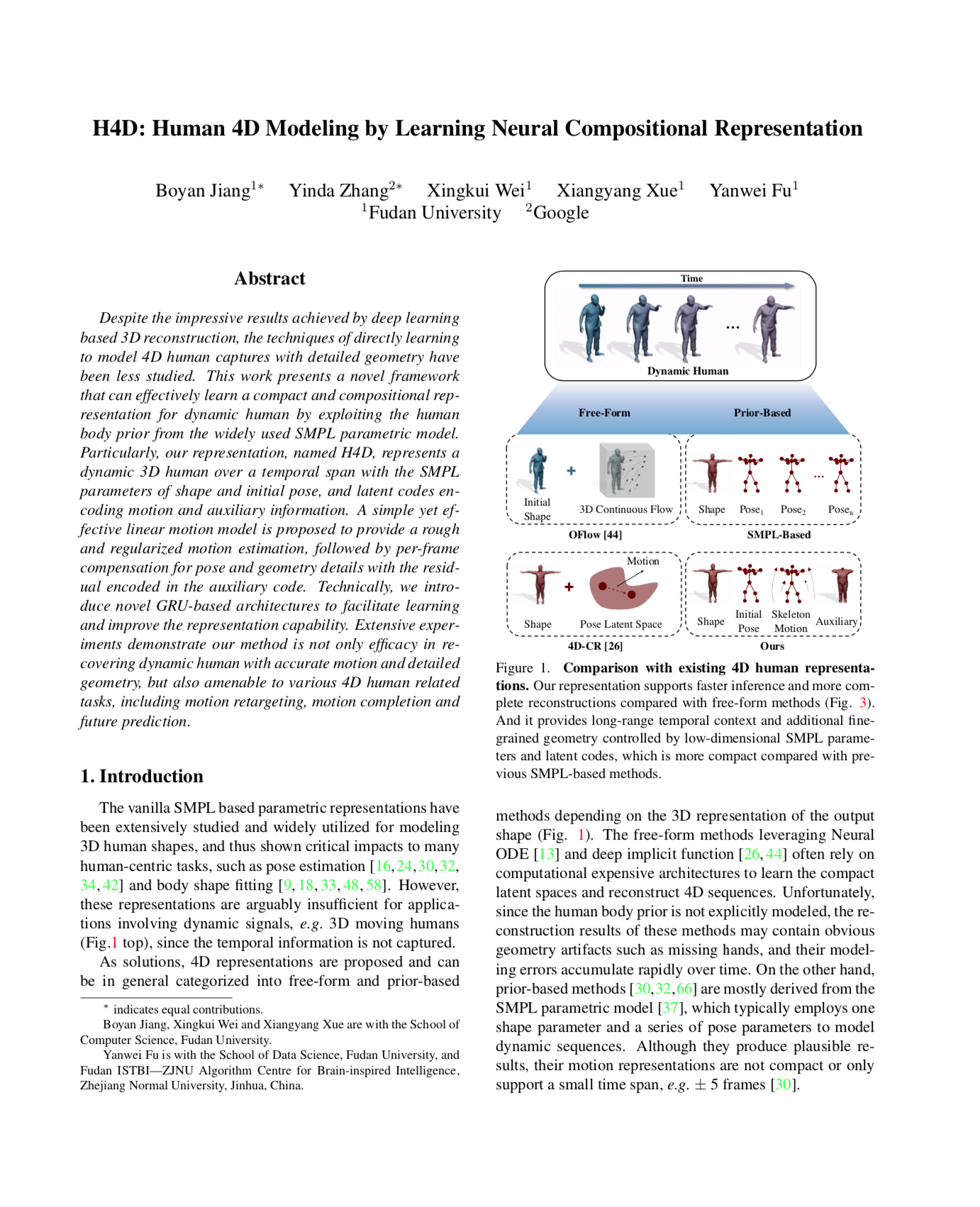

Overview of Our H4D Representation. We introduce a compact and compositional representation for 4D humans, which represents a temporally dynamic human with low-dimensional SMPL parameters for body shape and initial pose, and latent codes for skeleton motion and auxiliary information.


Despite the impressive results achieved by deep-learning-based 3D reconstruction, techniques that directly learn to model 4D human captures with detailed geometry have been less studied. This work presents a novel framework that can effectively learn a compact and compositional representation for dynamic humans by exploiting the human body prior from the widely used SMPL parametric model. In particular, our representation, named H4D, represents a dynamic 3D human over a temporal span with the SMPL parameters of shape and initial pose, and latent codes encoding motion and auxiliary information. A simple yet effective linear motion model is proposed to provide a rough and regularized motion estimation, followed by per-frame compensation for pose and geometry details with the residual encoded in the auxiliary code. Technically, we introduce novel GRU-based architectures to facilitate learning and improve the representation capability. Extensive experiments demonstrate that our method is not only effective in recovering dynamic humans with accurate motion and detailed geometry, but also amenable to various 4D human-related tasks, including motion retargeting, motion completion and future prediction.
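The two-stage idea in the abstract (a coarse linear motion estimate, then per-frame compensation) can be illustrated with a minimal NumPy sketch. This is not the released H4D code: the function names, the sizes `NUM_FRAMES` and `MOTION_DIM`, the random `basis`, and the treatment of the linear model as a map from the motion code to per-frame pose offsets are all assumptions made for illustration, and the residuals here are random stand-ins for what the GRU-based networks would decode from the auxiliary code.

```python
import numpy as np

# Hypothetical sizes, chosen only for illustration.
NUM_FRAMES = 30   # frames in the temporal span (assumed)
POSE_DIM = 72     # SMPL pose: 24 joints x 3 axis-angle values
MOTION_DIM = 16   # size of the latent motion code (assumed)


def linear_motion(initial_pose, motion_code, basis, mean_offsets):
    """Coarse per-frame poses: initial pose plus a linear function of the motion code."""
    # basis: (NUM_FRAMES * POSE_DIM, MOTION_DIM), mean_offsets: (NUM_FRAMES * POSE_DIM,)
    offsets = (basis @ motion_code + mean_offsets).reshape(NUM_FRAMES, POSE_DIM)
    return initial_pose[None, :] + offsets


def compensate(coarse_poses, pose_residuals):
    """Per-frame compensation: add residual pose corrections to the coarse poses."""
    return coarse_poses + pose_residuals


rng = np.random.default_rng(0)
initial_pose = rng.normal(size=POSE_DIM)
motion_code = rng.normal(size=MOTION_DIM)
basis = rng.normal(size=(NUM_FRAMES * POSE_DIM, MOTION_DIM)) * 0.01
mean_offsets = np.zeros(NUM_FRAMES * POSE_DIM)
# Stand-in for residuals that a learned decoder would produce from the auxiliary code.
pose_residuals = rng.normal(size=(NUM_FRAMES, POSE_DIM)) * 0.001

coarse = linear_motion(initial_pose, motion_code, basis, mean_offsets)
refined = compensate(coarse, pose_residuals)
print(coarse.shape, refined.shape)
```

Under these assumptions, the linear stage keeps the motion low-dimensional and regularized, while the residual stage is free to capture whatever the linear map cannot.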


B. Jiang, Y. Zhang, X. Wei, X. Xue, Y. Fu

H4D: Human 4D Modeling by Learning Neural Compositional Representation. CVPR 2022. [arXiv] [GitHub]

Video


Acknowledgements

This work was supported in part by NSFC Project (62176061) and Shanghai Municipal Science and Technology Major Project (2018SHZDZX01).

The corresponding authors are Xiangyang Xue and Yanwei Fu.

The website is modified from this template.
